Use Case Title

Canteen Tray Detection – Count trays carried by students in a lunch line

Team Members

- Aditya Behera

- Shriya Sanjeev Anila

- Adhikari Vaishnav Hemant Samira

- Bera Ritwik

- Bhavthankar Tejas

Use Case Importance

Detecting and counting trays carried by students in the lunch line automates cafeteria load monitoring and reduces manual tally errors. This real-time insight helps canteen managers optimize serving speed and plan inventory based on tray usage patterns.

Data Collection and Annotation

- Data Collection: Short video clips were recorded in a college cafeteria with a smartphone camera under varying lighting conditions and camera angles. Frames were then extracted from these videos using a custom Google Colab notebook, yielding 862 real-world images suitable for object detection training.

- Annotated Classes: One class (tray).

- Annotation Tool: Roboflow.

- Total Images: 862 (647 train, 129 val, 86 test).

- Sample Images:
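The frame extraction and the 647/129/86 split described in the list above can be sketched as follows. This is a minimal illustration, not the team's Colab notebook: the sampling interval, the file naming, the function names, and the seeded random shuffle are all assumptions, and the source does not say whether the split was produced in Roboflow or by hand. `extract_frames` needs the `opencv-python` package; the other two helpers use only the standard library.

```python
import random


def sample_frame_indices(total_frames, fps, every_seconds):
    """Return the indices of frames to keep, one every `every_seconds` seconds."""
    step = max(1, round(fps * every_seconds))
    return list(range(0, total_frames, step))


def extract_frames(video_path, out_dir, every_seconds=0.5):
    """Save the sampled frames of one video as JPEG files and return the count."""
    import os
    import cv2  # imported lazily so the other helpers work without OpenCV

    os.makedirs(out_dir, exist_ok=True)
    cap = cv2.VideoCapture(video_path)
    fps = cap.get(cv2.CAP_PROP_FPS) or 30.0  # assumed fallback if metadata is missing
    total = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))
    keep = set(sample_frame_indices(total, fps, every_seconds))

    saved, index = 0, 0
    while True:
        ok, frame = cap.read()
        if not ok:
            break
        if index in keep:
            cv2.imwrite(os.path.join(out_dir, f"frame_{index:06d}.jpg"), frame)
            saved += 1
        index += 1
    cap.release()
    return saved


def split_by_counts(items, counts, seed=0):
    """Shuffle items and cut them into consecutive groups of the given sizes."""
    if sum(counts) != len(items):
        raise ValueError("counts must add up to the number of items")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    groups, start = [], 0
    for n in counts:
        groups.append(shuffled[start:start + n])
        start += n
    return groups


# Reproduce the reported split sizes on placeholder image IDs.
train, val, test = split_by_counts(range(862), (647, 129, 86))
```

One caveat with a purely random split: neighbouring frames from the same clip can land in different subsets, which may make validation scores look better than they are. Splitting by clip instead of by frame is a common alternative.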

Model Training and Validation

- Model & Version: YOLOv8n (nano) from Ultralytics trained on Google Colab.

- Training Details: 100 epochs, batch size 16, image size 640×640, default learning rate (0.01), early stopping (patience=10).

- Augmentations: Mosaic, MixUp, HSV jitter.

- Monitored Metrics: mAP@0.5, precision, recall, training/validation loss.

- Additional Data: Initially, only 647 images were used for training, and performance was suboptimal. To improve results, additional frames were extracted from the source video, expanding the dataset to 862 images total. This significantly enhanced model training and inference performance.
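The training settings listed above map onto the Ultralytics Python API roughly as shown below. This is a sketch under stated assumptions, not the team's training script: the dataset file name `data.yaml` is hypothetical, the MixUp strength of 0.1 is an illustrative value (the source only says MixUp was used), and the Mosaic and HSV values are the Ultralytics defaults.

```python
def training_args(data_yaml="data.yaml"):
    """Collect the hyperparameters reported above as Ultralytics train() arguments."""
    return {
        "data": data_yaml,   # hypothetical path to the Roboflow-exported dataset file
        "epochs": 100,
        "batch": 16,
        "imgsz": 640,
        "lr0": 0.01,         # initial learning rate (Ultralytics default)
        "patience": 10,      # stop early after 10 epochs without improvement
        "mosaic": 1.0,       # Ultralytics default probability
        "mixup": 0.1,        # assumed value; the source does not state it
        "hsv_h": 0.015,      # HSV jitter, Ultralytics defaults
        "hsv_s": 0.7,
        "hsv_v": 0.4,
    }


def train(data_yaml="data.yaml"):
    """Fine-tune the pretrained YOLOv8n checkpoint with the settings above."""
    from ultralytics import YOLO  # requires the `ultralytics` package

    model = YOLO("yolov8n.pt")
    return model.train(**training_args(data_yaml))
```

Calling `train()` in a Colab session with the dataset in place would start a run; precision, recall, mAP@0.5, and the loss curves are logged by Ultralytics during training.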

For further details on training a custom model, please refer to this GitHub link:

Model Deployment and Demo Video

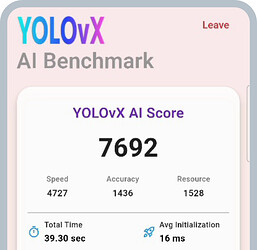

- Performance: ~8 FPS on a mid-range Android device with ~85% mAP@0.5 in live inference.

- Models Deployed: One YOLOv8n model in the YOLOvX mobile app.

- Demo Video: https://drive.google.com/file/d/1jt5IlfRpCQIyiRaXM2eteRxFlDqPGc48/view?usp=sharing
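To make the counting step and the FPS figure concrete, here is a small sketch of how per-frame tray counts and throughput could be computed from detector output. The detection tuple layout `(x1, y1, x2, y2, confidence)`, the 0.5 confidence threshold, and both function names are assumptions for illustration; the source does not describe how the mobile app does this internally.

```python
import time


def count_trays(detections, min_conf=0.5):
    """Count detections whose confidence meets the threshold.

    Each detection is assumed to be a tuple (x1, y1, x2, y2, confidence).
    """
    return sum(1 for det in detections if det[4] >= min_conf)


def measure_fps(infer, frames):
    """Run `infer` on every frame and return frames processed per second."""
    start = time.perf_counter()
    for frame in frames:
        infer(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed if elapsed > 0 else float("inf")


# Example: three boxes in one frame, two of them at or above the threshold.
frame_detections = [
    (0, 0, 10, 10, 0.9),
    (5, 5, 20, 20, 0.4),
    (30, 30, 50, 50, 0.5),
]
trays_in_frame = count_trays(frame_detections)
```

This counts trays visible in a single frame. Counting each tray once as students move through the line would additionally need some form of tracking across frames, which this sketch does not cover.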

Conclusion

The YOLOv8n model achieved reliable tray detection (~85% mAP@0.5) at near-real-time speed (~8 FPS) on a mid-range Android device, which can help streamline canteen operations. Key learnings include the importance of covering diverse lighting conditions in the dataset and the value of lightweight models for on-device inference.