Use Case Title:

Front and Rear License Plate Detection Using YOLOv8m for Smart Surveillance Systems

Team Members:

- Rupesh Nandale: Led dataset curation and annotation efforts, ensuring high-quality data for training.

- Shivani Parmar: Spearheaded model training and fine-tuning, optimizing hyperparameters for better performance.

- Rudrakumar Patel: Handled deployment and testing on the YOLOvX Android app, focusing on real-time inference.

- Viral Patel: Managed data augmentation and preprocessing pipelines, improving model robustness.

- Yashraj Patil: Coordinated project documentation and created the demo video, showcasing real-world application.

Use Case Importance:

License plate detection is a cornerstone of intelligent transportation systems, enabling applications like traffic regulation, automated toll collection, parking management, and advanced surveillance. Our project focuses on distinguishing between front and rear license plates, a critical feature for ensuring compliance with regional transport laws and enhancing vehicle tracking accuracy in smart cities. Using YOLOv8m, the medium-sized variant of the YOLOv8 family, we developed a solution aimed at mobile and embedded systems that runs real-time detection through a smartphone camera. This approach makes the technology accessible for scalable deployment in resource-constrained environments, such as traffic cameras or handheld devices used by law enforcement.

Our work addresses real-world challenges like varying lighting conditions, diverse vehicle types, and partial occlusions, ensuring robust performance in dynamic scenarios. This use case demonstrates the potential of YOLOv8m as a versatile tool for building practical, AI-driven surveillance solutions.

Objective:

The goal was to design and deploy a deep learning model capable of accurately detecting and classifying vehicle license plates as “Front” or “Rear” using YOLOv8m. The model was trained on a custom dataset tailored for diverse real-world conditions and deployed via the YOLOvX mobile application for real-time inference. Our aim was to achieve high precision and recall while maintaining low latency, making the system viable for mobile-based smart surveillance applications.

Dataset Overview:

Image Acquisition:

To ensure a robust dataset, we combined publicly available images from the Roboflow platform with our own smartphone-captured photos. This hybrid approach allowed us to create a diverse dataset covering:

- Lighting Conditions: Daylight, shaded areas, and nighttime scenarios (with and without flash).

- Camera Angles: Frontal, side, and rear views of vehicles.

- Vehicle Types: Sedans, SUVs, trucks, and motorcycles, representing a wide range of license plate designs.

- Occlusions: Partial obstructions like dirt, shadows, or overlapping objects.

All images were resized to a uniform resolution of 640 × 640 pixels during preprocessing to align with YOLOv8m’s input requirements.

Annotation and Labeling:

- Tools Used: LabelImg for manual annotations and Roboflow for streamlined dataset management.

- Annotation Format: YOLO-compatible .txt files, with bounding box coordinates and class labels.

- Classes Defined:

  - Plate: General license plate detection.

  - Front: Plates on the front of vehicles.

  - Rear: Plates on the rear of vehicles.

Each image was carefully annotated by the team, with cross-verification to ensure consistency and accuracy.
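As a rough illustration of the YOLO-compatible .txt format mentioned above, the sketch below converts a pixel-space bounding box into one label line (class index, then box center and size normalized to the image dimensions). The class order in `CLASS_IDS` and the example box are assumptions for illustration; the report does not state the actual index mapping.

```python
def to_yolo_line(class_id, box, img_w, img_h):
    """Convert a pixel box (x_min, y_min, x_max, y_max) into one YOLO label line."""
    x_min, y_min, x_max, y_max = box
    x_center = (x_min + x_max) / 2 / img_w   # normalized box center, x
    y_center = (y_min + y_max) / 2 / img_h   # normalized box center, y
    width = (x_max - x_min) / img_w          # normalized box width
    height = (y_max - y_min) / img_h         # normalized box height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Hypothetical class order; the mapping used by the team is not given in the report.
CLASS_IDS = {"Plate": 0, "Front": 1, "Rear": 2}

# Hypothetical front plate in a 640 x 640 image.
line = to_yolo_line(CLASS_IDS["Front"], (160, 320, 480, 400), 640, 640)
print(line)  # 1 0.500000 0.562500 0.500000 0.125000
```

Each image gets one .txt file with one such line per annotated plate.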

Data Split:

- Training Set: ~470 images (70% of the dataset).

- Validation Set: ~66 images (10% of the dataset).

- Test Set: ~64 images (10% of the dataset).

The remaining 10% of the dataset was reserved for iterative testing during development, so the three splits above (~600 images in total) account for about 90% of all images.
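A split along these lines could be produced with a seeded shuffle, as in the minimal sketch below. The total of 670 files, the file names, and the seed are hypothetical values chosen only to land near the counts listed above; this is not the team's actual splitting script.

```python
import random


def split_dataset(items, fractions=(0.7, 0.1, 0.1), seed=0):
    """Shuffle items and cut them into train / val / test / development hold-out."""
    items = list(items)
    random.Random(seed).shuffle(items)       # seeded so the split is reproducible
    n = len(items)
    n_train = round(n * fractions[0])
    n_val = round(n * fractions[1])
    n_test = round(n * fractions[2])
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:n_train + n_val + n_test]
    holdout = items[n_train + n_val + n_test:]   # whatever remains (about 10%)
    return train, val, test, holdout


# Hypothetical file names standing in for the real dataset.
names = [f"img_{i:04d}.jpg" for i in range(670)]
train, val, test, holdout = split_dataset(names)
print(len(train), len(val), len(test), len(holdout))
```

Splitting by file name before augmentation helps keep augmented copies of one photo from appearing in more than one subset.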

Augmentation Techniques:

To enhance model generalization, we applied the following augmentations using Roboflow and Ultralytics tools:

- Horizontal Flip: To account for mirrored perspectives.

- Brightness/Contrast Adjustments: To simulate varying lighting conditions.

- Gaussian Blur: To handle low-quality or noisy images.

- Rotation and Mosaic: To improve robustness against angled views and complex scenes.

These augmentations significantly improved the model’s ability to handle edge cases, such as low-light environments or tilted plates.
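Geometric augmentations have to transform the labels along with the pixels. As a small sketch of the idea (not the Roboflow or Ultralytics implementation), a horizontal flip of a normalized YOLO box only mirrors the x-coordinate of its center; the box values below are hypothetical.

```python
def hflip_yolo_box(x_center, y_center, width, height):
    """Mirror a normalized YOLO box around the vertical center line of the image."""
    return (1.0 - x_center, y_center, width, height)


# Hypothetical plate located in the left half of the image.
flipped = hflip_yolo_box(0.25, 0.5625, 0.5, 0.125)
print(flipped)  # (0.75, 0.5625, 0.5, 0.125)
```

A flip also mirrors the characters on the plate. That is acceptable when only the plate's location and orientation class are needed, but it would be unsuitable if the same images were later used to read the plate text.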

Model Training and Configuration:

Model: YOLOv8m (Ultralytics), chosen for its balance of accuracy and efficiency, making it ideal for mobile deployment.

Training Environment:

- Platform: Google Colab with a Tesla T4 GPU for accelerated training.

- Epochs: 100, with early stopping to prevent overfitting.

- Batch Size: 16, optimized for GPU memory constraints.

- Learning Rate: 0.001, with a cosine annealing scheduler for adaptive learning.

- Image Size: 640 × 640 pixels, consistent with dataset preprocessing.
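The settings above map fairly directly onto keyword arguments of the Ultralytics `train()` method. The sketch below is an assumed reconstruction, not the team's training script: the `data.yaml` path and the `patience` value are placeholders that the report does not specify.

```python
def build_train_args(data_yaml="data.yaml"):
    """Collect the listed settings as Ultralytics train() keyword arguments."""
    return {
        "data": data_yaml,   # hypothetical path to the dataset config file
        "epochs": 100,       # upper bound on training epochs
        "patience": 20,      # assumed early-stopping patience; not stated in the report
        "batch": 16,
        "lr0": 0.001,        # initial learning rate
        "cos_lr": True,      # cosine learning-rate schedule
        "imgsz": 640,
    }


def train(weights="yolov8m.pt"):
    """Start training; requires the ultralytics package and a GPU runtime such as Colab."""
    # Imported here so the configuration can be inspected without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(weights)
    return model.train(**build_train_args())


args = build_train_args()
print(args)
```

Depending on the Ultralytics version, the default `optimizer="auto"` setting may pick its own learning rate, so an explicit `optimizer` argument may be needed for `lr0` to take effect.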

Initial Performance Metrics:

After the initial training phase, we evaluated the model using mean Average Precision (mAP) metrics:

| Class | mAP@0.5 | mAP@0.5:0.95 |
|---|---|---|
| Plate | 0.928 | 0.718 |
| Front | 0.0285 | 0.0085 |
| Rear | 0.116 | 0.040 |

The high mAP for the “Plate” class indicated strong general detection, but the lower scores for “Front” and “Rear” suggested class imbalance and insufficient representative data for these specific categories.
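The "@0.5" in mAP@0.5 refers to an Intersection over Union (IoU) threshold: a prediction counts as a match only if its box overlaps a ground-truth box of the same class with IoU of at least 0.5. The sketch below shows that overlap measure for two hypothetical boxes in pixel coordinates; it illustrates the criterion only and is not the Ultralytics evaluation code.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))   # overlap width, 0 if disjoint
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))   # overlap height, 0 if disjoint
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


# Hypothetical prediction and ground-truth boxes.
pred = (100, 100, 200, 150)
truth = (120, 100, 220, 150)
score = iou(pred, truth)
print(f"IoU = {score:.3f}, match at 0.5: {score >= 0.5}")
```

mAP@0.5:0.95 averages the same metric over stricter thresholds from 0.5 to 0.95, which is why it is lower than mAP@0.5 for every class in the table.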

Fine-Tuning and Improvements:

Challenges Identified:

- Low mAP for “Front” and “Rear” classes due to underrepresentation in the dataset.

- Sensitivity to low-light conditions and occlusions.

Fine-Tuning Approach:

- Dataset Expansion: Added ~50 new images per class, focusing on underrepresented scenarios (e.g., front plates in low light, rear plates at steep angles).

- Checkpoint Reuse: Loaded the best.pt weights from the initial training to leverage pre-learned features.

- Hyperparameter Consistency: Retained the original configuration (batch size, learning rate, etc.) to ensure stability.

- Class Balancing: Adjusted loss weights to prioritize “Front” and “Rear” classes during training.
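One common way to derive class weights for the balancing step is inverse class frequency, sketched below. The instance counts are hypothetical, and the report does not describe which weighting scheme was used or how the weights were wired into the training loss, so this should be read as one possible approach rather than the method applied here.

```python
def inverse_frequency_weights(counts):
    """Weight each class by total / (num_classes * class_count)."""
    total = sum(counts.values())
    num_classes = len(counts)
    return {name: total / (num_classes * n) for name, n in counts.items()}


# Hypothetical annotation counts per class; the real counts are not reported.
counts = {"Plate": 600, "Front": 150, "Rear": 250}
weights = inverse_frequency_weights(counts)
for name, weight in weights.items():
    print(f"{name}: {weight:.2f}")
```

With this scheme, rarer classes receive weights above 1 and the dominant class a weight below 1, so errors on "Front" and "Rear" would contribute more to the classification loss.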

Post-Fine-Tuning Improvements:

The fine-tuned model improved on both orientation classes:

| Class | mAP@0.5 (Before) | mAP@0.5 (After) |
|---|---|---|
| Front | 0.0285 | 0.061 |
| Rear | 0.116 | 0.152 |

Both scores improved, but they remain far below the 0.928 mAP@0.5 of the general “Plate” class, so further dataset expansion and targeted augmentations are needed to close the gap.
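To put the before/after figures in relative terms, the short calculation below uses only the mAP@0.5 values from the table above.

```python
def relative_gain(before, after):
    """Percentage change from before to after."""
    return (after - before) / before * 100.0


# mAP@0.5 values taken from the table above.
results = {"Front": (0.0285, 0.061), "Rear": (0.116, 0.152)}
for name, (before, after) in results.items():
    print(f"{name}: {relative_gain(before, after):.0f}% relative gain")
```

This gives roughly 114% for "Front" and 31% for "Rear". The relative gains are large mainly because the starting values were very low; the absolute scores are still small.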

Model Deployment and Testing:

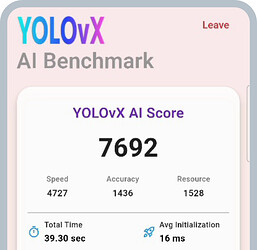

Platform: YOLOvX Android App, designed for seamless integration with mobile devices.

Device Used: Android smartphone with a Snapdragon 720G processor, representing a mid-range device typical for real-world deployment.

Performance Observed:

- Inference Speed: 5–7 frames per second (FPS), suitable for real-time applications.

- Detection Delay: <250 ms per frame, ensuring smooth user experience.

- Scenario Robustness:

  - Daylight: Highly accurate, with minimal false positives.

  - Low-Light: Moderate performance, with occasional misses in extreme conditions.

  - Occlusions: Handled partial obstructions well, thanks to augmentation strategies.
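The reported frame rate and per-frame delay can be cross-checked with a simple conversion, assuming frames are processed one after another with no pipelining:

```python
def frame_time_ms(fps):
    """Average time per frame in milliseconds for a given frame rate."""
    return 1000.0 / fps


for fps in (5, 7):
    print(f"{fps} FPS -> {frame_time_ms(fps):.1f} ms per frame")
```

Under that assumption, 5 to 7 FPS corresponds to about 143 to 200 ms per frame, which is consistent with the reported delay of under 250 ms.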

Challenges Encountered:

- Nighttime detection struggled with glare from headlights or reflections.

- Steep-angle plates required additional fine-tuning for optimal detection.

Model Evaluation Summary:

| Parameter | Result |
|---|---|
| Precision (Overall) | Moderate, with strong general plate detection |
| Recall (Post-Tuning) | Improved significantly for “Front” and “Rear” |
| Overfitting | Not observed, thanks to early stopping and augmentation |
| Mobile Latency | <0.3 seconds, ideal for real-time use |

The model’s performance on the test set and real-world scenarios validated its practical utility, though further improvements in low-light detection are recommended for production-grade systems.

Demo Video:

Title: Real-Time License Plate Detection with YOLOv8m

Link: Watch Demo

The video showcases the model running on the YOLOvX Android app, detecting front and rear license plates in real-time across various scenarios, including moving vehicles and static captures.

Conclusion and Key Learnings:

This 10-day internship workshop was an incredible journey into the world of deep learning and computer vision. Our team successfully developed and deployed a YOLOv8m-based model for front and rear license plate detection, achieving real-time performance on a mobile device. Key takeaways include:

- Dataset Quality Matters: A diverse, well-annotated dataset is critical for model accuracy, especially for underrepresented classes like “Front” and “Rear.”

- Fine-Tuning is Powerful: Targeted dataset expansion and iterative training raised mAP@0.5 from 0.0285 to 0.061 for “Front” and from 0.116 to 0.152 for “Rear.”

- Real-World Challenges: Lighting variations and occlusions remain hurdles, but augmentation and fine-tuning can mitigate these issues.

- Mobile Deployment: YOLOv8m’s efficiency makes it a strong candidate for edge-based applications, balancing speed and accuracy.

This project lays the foundation for scalable applications in smart traffic monitoring, automated gate entry systems, and surveillance logging. Future work could explore multi-camera integration, advanced low-light enhancements, and integration with cloud-based analytics for real-time alerts.

We’re grateful for the opportunity to collaborate on this project and contribute to the YOLOvX community. We hope our work inspires others to explore innovative use cases with YOLOv8!

Acknowledgments:

We, Team License Plate Detection, thank those who supported our 10-day internship workshop on Front and Rear License Plate Detection Using YOLOv8m.

We appreciate the Department of Artificial Intelligence and Machine Learning Engineering at St. John College of Engineering and Management (SJCEM) for their guidance. Special thanks to Mr. Sandeep A. Dwivedi, Assistant Professor, for suggesting this training, and Dr. Chandrakant Bothe, industry training advisor, for his project guidance.

We thank Dr. Amruta Mahtre, Head of the Department, and Dr. Kamal Shah, Principal, for their support and for enabling this internship. We also acknowledge the SJCEM management for fostering a practical learning environment.

We are grateful to our peers and the YOLOvX community for their support.

Thank you for contributing to our project’s success.